Trust game. How to create your own DeepFake in 5 minutes

In this article, we’ll talk about how to make a professional DeepFake without writing hundreds of lines of code.

If you are reading this article, you have most likely already heard of the artificial intelligence technique known as DeepFake. Today, deepfakes are used almost everywhere, from cinema to porn videos. A recent study showed that 96% of deepfakes on the Internet are porn videos. In most cases, users create fake celebrity porn videos or use the technology for revenge porn.

In addition to pornography, the technology is also used in politics, to create fake news, and for various kinds of deception. There are many such videos of political figures on the Internet; in one of them, for example, Obama calls Trump a complete dipshit. In April 2018, BuzzFeed showed how far video forgery has come by combining the face of Barack Obama with the convincing voice of Jordan Peele.

BuzzFeed Deepfake Example

However, the technology is used not only to harm society but also to benefit it. For example, the Salvador Dalí Museum in Florida organized a special exhibition, Dalí Lives, in honor of the 115th anniversary of the famous artist’s birth. The project curators used an AI-generated likeness of the artist, who talked with museum visitors and told them stories about his paintings and life.

But you don’t need to be a skilled developer to create your own deepfake. All you need is a regular photo that you want to animate and a video of your favorite artist or the one whose movements you want to imitate.

To do this, we will use image animation: a neural network makes the object in the photo move according to the video sequence you choose. By the end of this article, you will see that you can animate any photo without writing a single line of code.

How does it work?

Deepfakes are based on generative adversarial networks (GANs). These are machine learning algorithms that can generate new content from a given data set. For example, a GAN can study a thousand photographs of Barack Obama and create a new one of its own, preserving all the features and facial expressions of the ex-president.

We will use the model introduced in "First Order Motion Model for Image Animation", a new approach to replacing an object in a video with an object from another image without specifying any additional information or writing additional code.

Before building a video sequence, it is important to understand how the approach works.

With this model, the neural network reconstructs the video so that its subject is replaced by the object from the original image. During testing, the program tries to predict how the object in the original image will move, based on the supplied video. In this way, even the smallest movement in the video is tracked, from a turn of the head to the movement of the corners of the lips.

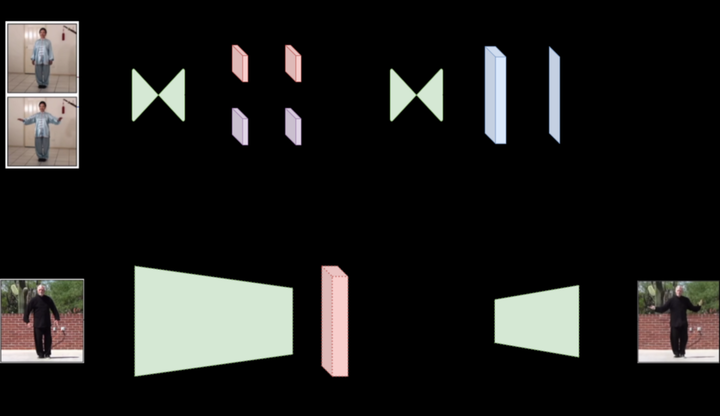

The process of creating a DeepFake

Training is carried out on a large number of videos. To reconstruct a video, the model extracts several frames and tries to learn the patterns of the movements performed. By analyzing the extracted information, it learns to encode motion as a combination of motion-specific keypoint displacements and local affine transformations.

During testing, the model reconstructs the video sequence by inserting the object from the original image into each frame of the video, thereby animating it.

The framework consists of a motion estimation module and an image generation module.

The purpose of the motion estimation module is to understand exactly how the movements are performed (a “latent representation of motion”). Simply put, it tracks the movements in sequence and encodes them as keypoint displacements and local affine transformations. The result is a dense motion field and an occlusion mask that work together. The mask determines which parts of the moving object can be reconstructed from the original image (for example, the lower part of the face) and which parts are not visible in it and must be inferred from context.
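
To make the idea of keypoints with local affine transformations more concrete, here is a minimal sketch in plain Python. It assumes the first-order approximation described in the paper: near a keypoint, a pixel of the video frame is mapped back to the original image as the keypoint position in the original image plus a 2x2 matrix applied to the pixel's offset from the keypoint in the video frame. The function name `map_to_source`, the coordinates, and the matrices are illustrative assumptions, not code from the model's repository.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]
Matrix2x2 = Sequence[Sequence[float]]


def map_to_source(pixel: Point, driving_kp: Point, source_kp: Point,
                  jacobian: Matrix2x2) -> Point:
    """Map a video-frame pixel near a keypoint to original-image coordinates."""
    # Offset of the pixel relative to the keypoint in the video frame.
    dx = pixel[0] - driving_kp[0]
    dy = pixel[1] - driving_kp[1]
    # Apply the local 2x2 affine matrix to that offset.
    sx = jacobian[0][0] * dx + jacobian[0][1] * dy
    sy = jacobian[1][0] * dx + jacobian[1][1] * dy
    # Re-anchor the transformed offset at the keypoint in the original image.
    return (source_kp[0] + sx, source_kp[1] + sy)


# Hypothetical values: the same keypoint sits at (10, 20) in the video frame
# and at (30, 40) in the original image.
identity = [[1.0, 0.0], [0.0, 1.0]]   # pure shift, no local deformation
stretch_x = [[2.0, 0.0], [0.0, 1.0]]  # local horizontal stretch

print(map_to_source((12.0, 20.0), (10.0, 20.0), (30.0, 40.0), identity))   # (32.0, 40.0)
print(map_to_source((12.0, 20.0), (10.0, 20.0), (30.0, 40.0), stretch_x))  # (34.0, 40.0)
```

Evaluating such a mapping for every pixel, and combining the mappings of all keypoints, is roughly what yields a dense motion field; the real model predicts the keypoints and matrices with a neural network instead of taking them as fixed inputs.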

For example, in this GIF, the lady’s back is not visible in the original image, so it cannot be animated from it directly and has to be inferred.

Finally, the output of the motion estimation module is sent to the image generation module along with the original image and the selected video file. The image generator creates the frames of the moving video with the object replaced by the one from the original image. The frames are then joined together to create a new video.
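
As a rough illustration of how a mask can steer frame generation, the sketch below blends two tiny grayscale images pixel by pixel. This is a deliberate simplification and an assumption on our part: the real model applies the mask to feature maps inside the generator network rather than to finished pixels, and the names `blend_with_mask`, `warped`, and `inferred` are hypothetical.

```python
from typing import List

Image = List[List[float]]  # grayscale image as rows of pixel intensities


def blend_with_mask(warped: Image, inferred: Image, mask: Image) -> Image:
    """Blend per pixel: mask 1.0 keeps the warped original image,
    mask 0.0 uses content inferred from context."""
    return [
        [m * w + (1.0 - m) * g for w, g, m in zip(w_row, g_row, m_row)]
        for w_row, g_row, m_row in zip(warped, inferred, mask)
    ]


# Hypothetical 2x2 example: the top row is fully visible in the original image,
# the bottom-left pixel is hidden, and the bottom-right pixel is half visible.
warped = [[0.8, 0.8], [0.8, 0.8]]
inferred = [[0.2, 0.2], [0.2, 0.2]]
mask = [[1.0, 1.0], [0.0, 0.5]]

frame = blend_with_mask(warped, inferred, mask)
print(frame)
```

Repeating this for every frame of the video and joining the results gives the final clip.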

Creating DeepFakes

You can easily find the source code on GitHub, clone it to your own machine, and run everything there. However, there is an easier way that lets you get a finished video in just 5 minutes.

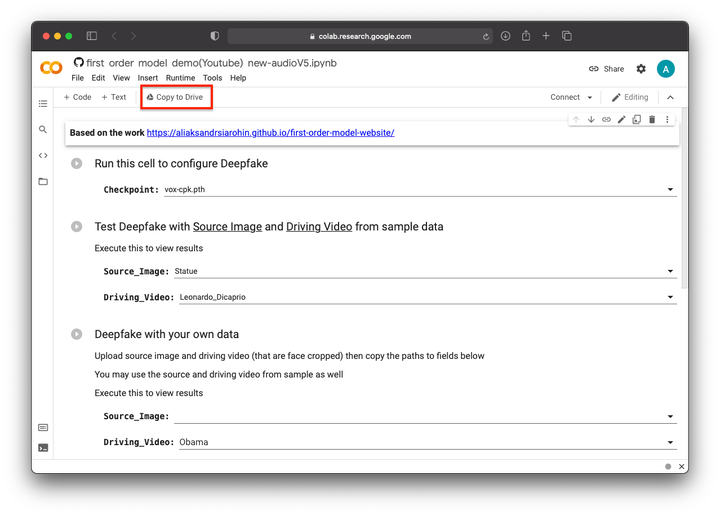

- Follow the link: https://colab.research.google.com/github/AwaleSajil/DeepFake_1/blob/master/first_order_model_demo(Youtube)_new_audioV5_a.ipynb

- Create a copy of the ipynb file in your Google drive.

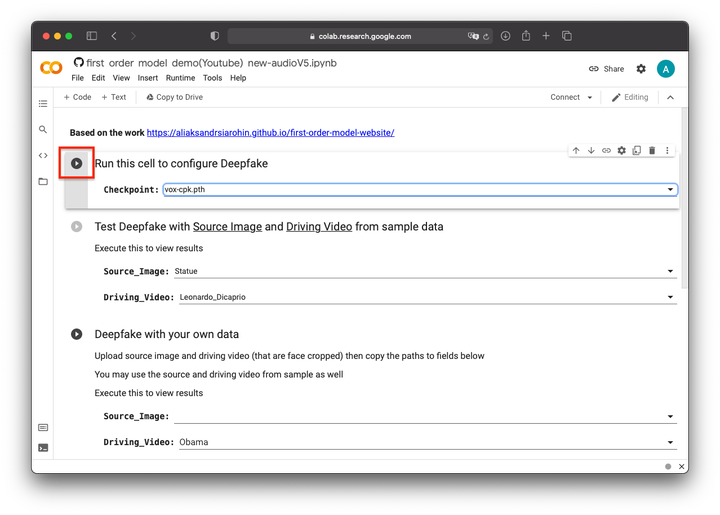

3 Run the first process to download all required resources and set model parameters.

4 Then you can test the algorithm using a pre-prepared collection of videos and photos. Just select a source image from the collection and the video you want to project onto that image. After a couple of minutes, you will have a ready-made deepfake in your hands.

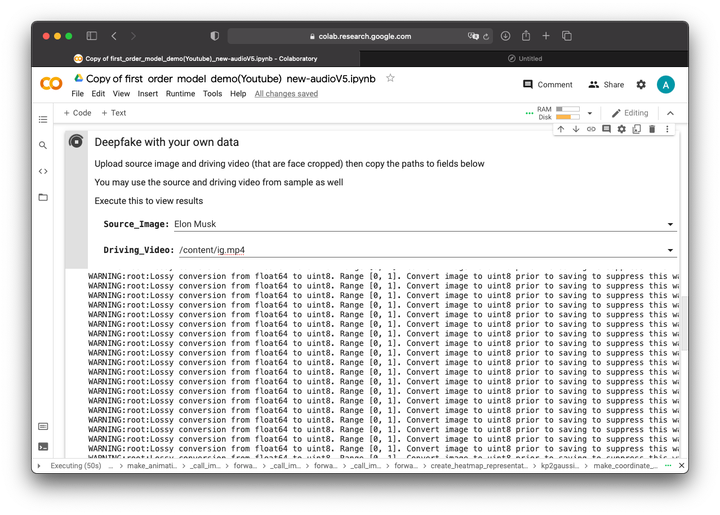

5 To create your own video, enter the path to the original image and the motion video in the third cell. You can download them directly to the folder with the model, which can be opened by clicking on the menu folder icon on the left. It is important that your video is cropped to the face and is in mp4 format. You can also use the examples from the collection in this section.
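
Before pasting your own paths into the notebook, it can help to check them with a few lines of Python. The sketch below is not part of the notebook: the helper name `check_inputs` and the set of accepted image extensions are assumptions made for illustration. It only checks file types and existence; whether the video is cropped to the face still has to be checked by eye.

```python
from pathlib import Path
from typing import List

# Assumed set of image types; adjust it to whatever your photo actually is.
IMAGE_SUFFIXES = {".png", ".jpg", ".jpeg"}


def check_inputs(image_path: str, video_path: str) -> List[str]:
    """Return a list of problems found; an empty list means the paths look fine."""
    problems = []
    image, video = Path(image_path), Path(video_path)
    if image.suffix.lower() not in IMAGE_SUFFIXES:
        problems.append(f"unexpected image type: {image.name}")
    if video.suffix.lower() != ".mp4":
        problems.append(f"video must be an mp4 file: {video.name}")
    for path in (image, video):
        if not path.is_file():
            problems.append(f"file not found: {path}")
    return problems


# Hypothetical file names; replace them with the files you uploaded.
for problem in check_inputs("my_photo.png", "my_motion.avi"):
    print(problem)
```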

As a result, by combining a video of Ivangai with a photo of Elon Musk, we got the following deepfake: